Max Tokens Settings Improvements

I have all my models set to output the maximum number of tokens allowed by the model. For example, I have the max tokens setting for Gemini 2.5 Pro set to 65536 and Claude Opus 4 set to 32000.

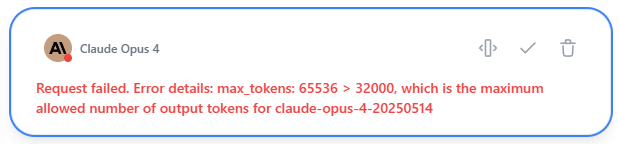

The problem is that when I use multiple models to respond to a prompt, every model is sent the max tokens value of the first model I selected. If I select Gemini 2.5 Pro and then Claude Opus 4, Claude Opus 4 returns an error because the max tokens value is too high for it. The error is shown below. If I select Opus 4 first and then Gemini 2.5 Pro, it works, but it's difficult to remember which model has the lowest max tokens setting.

This needs to be fixed. Some suggestions include:

1. Send each model its own max tokens setting. In the example above, the max tokens value sent to Gemini would be 65536 and the value sent to Opus would be 32000.

2. Set the max tokens for the chat to the lowest setting among the selected models. In the example above, the max tokens value sent to all models would be 32000, regardless of the order in which the models are added when creating a multi-model response.
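To make the difference between the current behavior and the two suggestions concrete, here is a minimal sketch in Python. It is not the app's actual code: `ModelConfig`, `first_model_limit`, `per_model_limits`, and `shared_minimum_limit` are hypothetical names, and it assumes each selected model carries the user's own max tokens setting.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelConfig:
    """Hypothetical per-model settings: the model name and the user's max tokens value."""
    name: str
    max_tokens: int


def first_model_limit(selected: list[ModelConfig]) -> dict[str, int]:
    """Reported behavior: every model gets the first selected model's setting."""
    first = selected[0].max_tokens
    return {m.name: first for m in selected}


def per_model_limits(selected: list[ModelConfig]) -> dict[str, int]:
    """Suggestion 1: each model gets its own setting."""
    return {m.name: m.max_tokens for m in selected}


def shared_minimum_limit(selected: list[ModelConfig]) -> dict[str, int]:
    """Suggestion 2: every model gets the lowest setting among the selected models,
    so the result no longer depends on the order the models were added."""
    if not selected:
        raise ValueError("at least one model must be selected")
    lowest = min(m.max_tokens for m in selected)
    return {m.name: lowest for m in selected}


gemini = ModelConfig("Gemini 2.5 Pro", 65536)
opus = ModelConfig("Claude Opus 4", 32000)

print(first_model_limit([gemini, opus]))     # {'Gemini 2.5 Pro': 65536, 'Claude Opus 4': 65536}
print(per_model_limits([gemini, opus]))      # {'Gemini 2.5 Pro': 65536, 'Claude Opus 4': 32000}
print(shared_minimum_limit([gemini, opus]))  # {'Gemini 2.5 Pro': 32000, 'Claude Opus 4': 32000}
```

Suggestion 1 lets each model use its full output budget. Suggestion 2 is simpler to apply at the chat level, but it caps Gemini at Opus's limit.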

Ultimately, I wish I could just set all the models to the max allowed tokens and never have to deal with it again in any situation. That would be my ideal solution.


Open

Feature Request

10 months ago
