Custom System Prompt Per Model in Override Parameters

Right now, under Override Global Model Parameters, we can only set a single global system prompt that applies to all models. This is very limiting because:

Each model often needs a different optimized system prompt to perform at its best.

Example: For GPT-5, I might set the system prompt to

Knowledge cutoff: 2024-06.

For Claude, I would want

Knowledge cutoff: 2025-01.

For GPT-4.1, I may use a more optimized or simplified structure that works better for that model.

Using one global prompt for every model is not ideal; some prompts actually make performance worse on other models.

While it’s possible to create separate agents with different system prompts, that’s not practical because it means creating and managing a new agent for every single model variation.

Proposed Solution

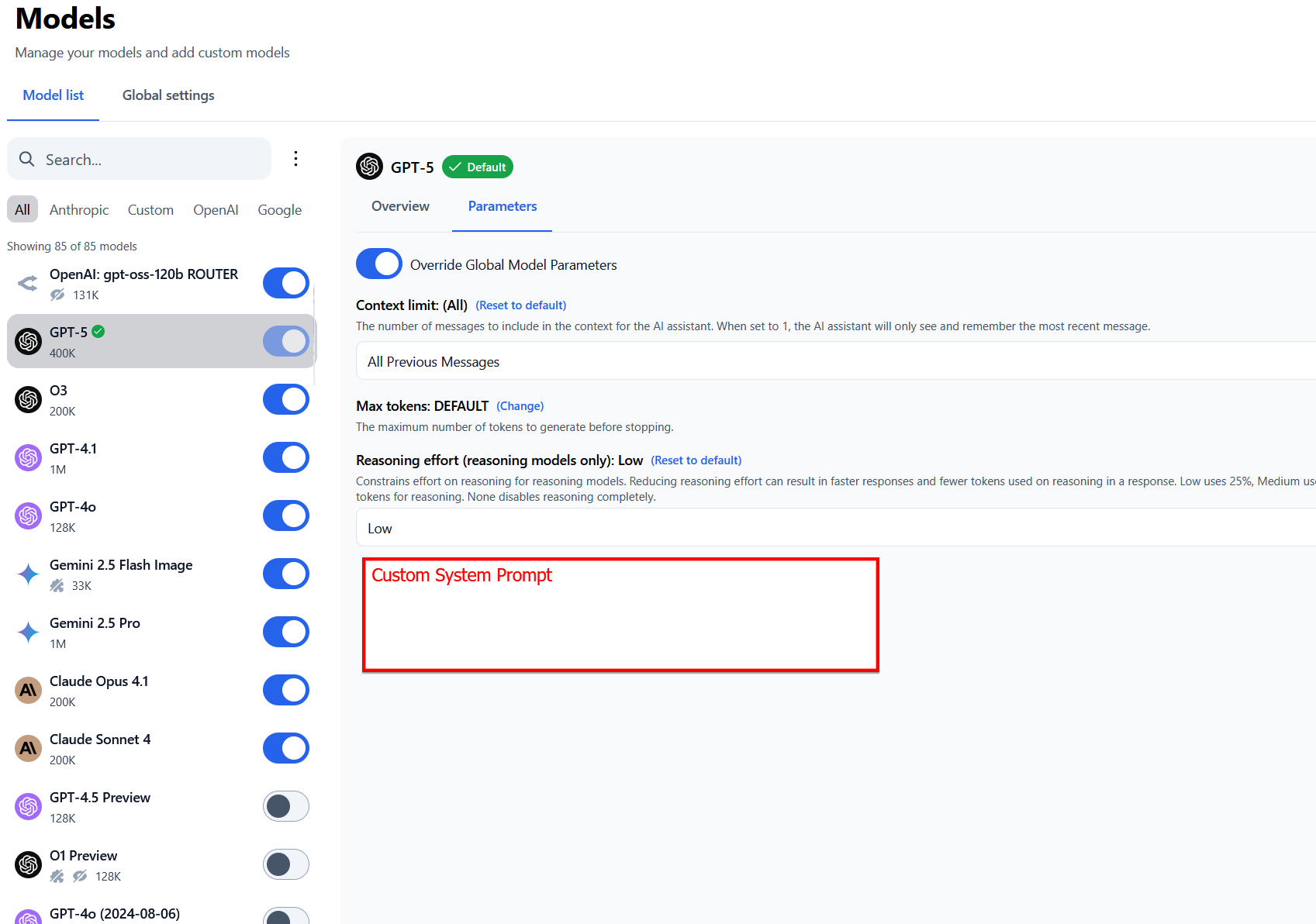

Add a Custom System Prompt field per model in the Override Parameters section (where context limit, max tokens, reasoning effort are already set).
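To make the idea concrete, here is a minimal sketch of how the setting could be stored and resolved. The `ModelOverrides` name, its fields, the model keys, and the global prompt text are all my own placeholders, not the app's actual schema; the two cutoff prompts are just the examples from above.

```python
from dataclasses import dataclass
from typing import Dict, Optional


# Hypothetical settings shape; field names are illustrative only.
@dataclass
class ModelOverrides:
    context_limit: Optional[int] = None
    max_tokens: Optional[int] = None
    reasoning_effort: Optional[str] = None
    system_prompt: Optional[str] = None  # the proposed new field


# Placeholder global prompt and placeholder model keys.
GLOBAL_SYSTEM_PROMPT = "You are a helpful assistant."

OVERRIDES: Dict[str, ModelOverrides] = {
    "gpt-5": ModelOverrides(system_prompt="Knowledge cutoff: 2024-06."),
    "claude": ModelOverrides(system_prompt="Knowledge cutoff: 2025-01."),
    "gpt-4.1": ModelOverrides(max_tokens=4096),  # no custom prompt set
}


def resolve_system_prompt(model_id: str) -> str:
    """Return the per-model prompt if one is set, else the global prompt."""
    override = OVERRIDES.get(model_id)
    if override is not None and override.system_prompt:
        return override.system_prompt
    return GLOBAL_SYSTEM_PROMPT
```

With this shape, models without a custom prompt keep today's behavior and fall back to the global prompt, so the new field would be optional.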

Handle the edge case where a user switches models mid-conversation: the system prompt should automatically change to the one defined for the newly selected model, without needing to restart the conversation.
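One way the mid-conversation switch could work, as a sketch: keep only user and assistant turns in the stored history and attach the system prompt when each request is built, so the prompt always follows the currently selected model. `build_request`, the model keys, and the prompt strings here are hypothetical, not how the app is known to work.

```python
from typing import Dict, List

Message = Dict[str, str]

# Hypothetical values: model keys and prompt text are placeholders.
GLOBAL_PROMPT = "You are a helpful assistant."
PER_MODEL_PROMPTS: Dict[str, str] = {
    "gpt-5": "Knowledge cutoff: 2024-06.",
    "claude": "Knowledge cutoff: 2025-01.",
}


def build_request(model_id: str, history: List[Message]) -> List[Message]:
    """Prepend the system prompt for the currently selected model.

    Any system message already in the history is dropped, so a prompt
    meant for a previously selected model is never carried over.
    """
    prompt = PER_MODEL_PROMPTS.get(model_id, GLOBAL_PROMPT)
    turns = [m for m in history if m["role"] != "system"]
    return [{"role": "system", "content": prompt}] + turns


history: List[Message] = [
    {"role": "user", "content": "Hi"},
    {"role": "assistant", "content": "Hello!"},
]
first = build_request("gpt-5", history)

# The user switches models; the same history is reused unchanged.
history.append({"role": "user", "content": "Continue with Claude."})
second = build_request("claude", history)

print(first[0]["content"])   # Knowledge cutoff: 2024-06.
print(second[0]["content"])  # Knowledge cutoff: 2025-01.
```

Because the prompt is resolved per request rather than stored with the conversation, nothing has to be migrated or restarted when the model changes.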

Completed

Feature Request

8 months ago
